Quantum #107

Issue #107 of the weekly HPC newsletter by HMx Labs. Slurm got a bit of attention this week from Nvidia and the analysts but I think the bigger picture here is being missed. AWS gave us another way to abuse S3 and the DOE should probably talk to CERN.

Nvidia dropped a technical paper last week delving into topology-aware scheduling on rack-scale GPU clusters. Subsequent to their acquisition of Slurm, which now seems truly integrated into Mission Control, I think this is the first in-depth guide from Nvidia on how to do this. A sign of further investment to come in the Slurm ecosystem?

It came, though, in the same week as a piece from Reuters, with contributions from Intersect360, on the hazards of Nvidia’s Slurm acquisition. Aside from highlighting that the concerns around this have not dissipated, I don’t think it really said anything new, or anything that wasn’t said back in December last year at the time of the acquisition. In the intervening time, though, something else substantial has happened, and I think worrying about the impact of Nvidia’s acquisition of Slurm is almost missing the wood for the trees. We are now in an environment where the use of AI in coding is having a massive impact on open source software and the communities around it. That covers everything from maintainers drowning in AI slop PRs, to AI washing source code (to change its license), to simply making it easier to fork and maintain your own versions of popular open source projects. (Not a fork, but extensions like S9S are a good example for Slurm.)

While our own work confirms (at least to me) we aren’t going to see a vibe coded Slurm replacement any time soon, it also shows that forking Slurm and adapting it to your own specific use cases isn’t the challenge it once was either. My concerns around how Slurm evolves under Nvidia’s ownership remain, but honestly, I have other bigger concerns around FOSS more generally. There also isn’t a shortage of alternatives to Slurm.

In the classical HPC space, the US DOE released SYNAPSE-I to handle real-time data processing at HPC scale. On reading this I did wonder if they had spoken to CERN, who face similar challenges, but I guess with geopolitics being the way they are right now that probably isn’t going to happen.

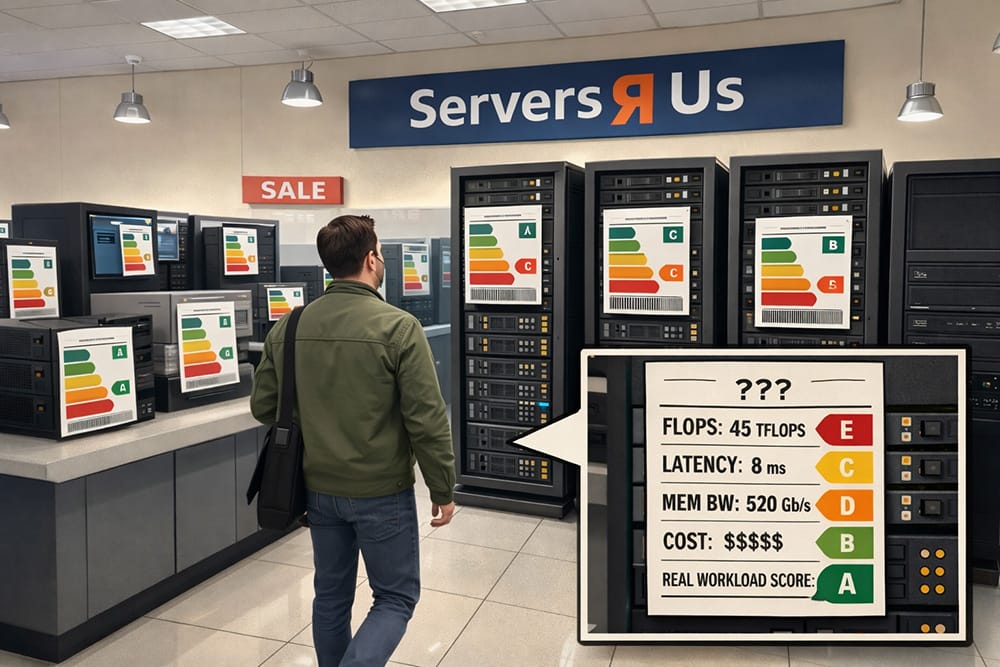

Our own news from last week: If you haven’t already told us, let me know what benchmarks you’d like to see in our data to make your infrastructure selection decisions. Also, the piece on LLM inference as Monte Carlo paths seems to have resonated with a few of you and is worth a read if you haven’t already.

Oh, and AWS gave us a real filesystem on top of S3. Given the number of HPC workloads already using S3, I give it all of 4 femtoseconds before the first HPC workload attempts to use that too.

In The News

Updates from the big three clouds on all things HPC.

Reuters and Intersect360 worry about Nvidia's Slurm acquisition.

And our original piece, written at the time:

DOE goes real time with data analysis at HPC scale

From HMx Labs

If using AI reliably means gating its output on automated validation, are we just aiming for LLM inference as Monte Carlo paths?

Want to help drive the direction of FLOPx and make sure we have benchmark data that you care about?

Know someone else who might like to read this newsletter? Forward this on to them or even better, ask them to sign up here: https://cloudhpc.news