Quantum #109

Issue #109 of the weekly HPC newsletter by HMx Labs. Cerebras files for an IPO, Google gives us TPU v8, Meta adopts Graviton, Bolt Graphics tapes out its first GPU and we try to make workloads a tiny bit more portable.

Cerebras decided to attempt another IPO last week, while Bolt Graphics taped out its first GPU in an attempt to claim some of the FP64 space vacated by Nvidia, all while Google announced its 8th generation TPUs. The market for accelerated computing certainly seems to be a lot more diverse than it has ever been, and it will be interesting to see how many, if any, of these alternatives to Nvidia’s CUDA ecosystem manage to gain a significant foothold.

I would say the choice is nice to have, but the reality is we can barely even swap across CPU architectures in many domains (more on this in a bit), let alone across the significantly more difficult accelerated computing domains. For now the choice may give us a more competitive market (and even then I’m not so sure), but for most people it will still mean picking a direction and sticking with it for a number of years, if not decades.

The timing of Cerebras’ IPO is also interesting, being, as it would be, the first major AI IPO this year. Are they trying to get out ahead of OpenAI and Anthropic, who would leave about as much liquidity as there is in the Sahara?

Meta announced a partnership with AWS and the adoption of Graviton CPUs amidst increasing chatter that we’re now running short of CPU capacity too. Allegedly this is because agentic workflows demand more CPU capacity, with GPU to CPU ratios collapsing to close to 1:1 instead of the current 2:1 or even 4:1. I don’t buy it. At least not for CPUs collocated with GPUs. It simply makes no sense to burden a GPU machine with anything other than GPU workloads. You’d architect any system at scale to offload CPU-only compute elsewhere. And quite frankly I don’t buy that it’s even CPU bound at all. It’s more than likely IO bound. I’m sure Intel/AMD/ARM would love to make more money on their CPUs, and this is a useful narrative, but I don’t for a minute think it’s true. At least not for the reasons being proposed.

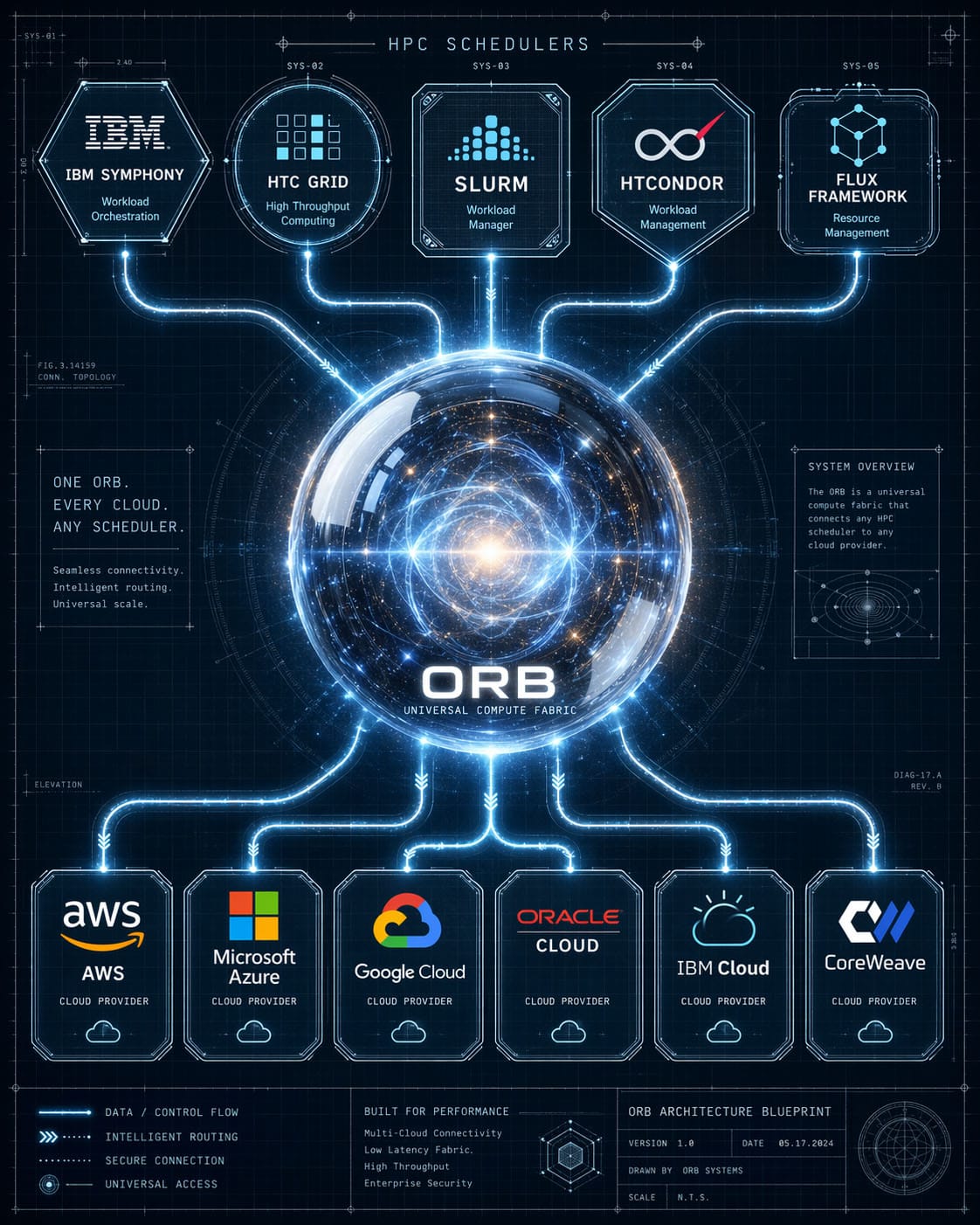

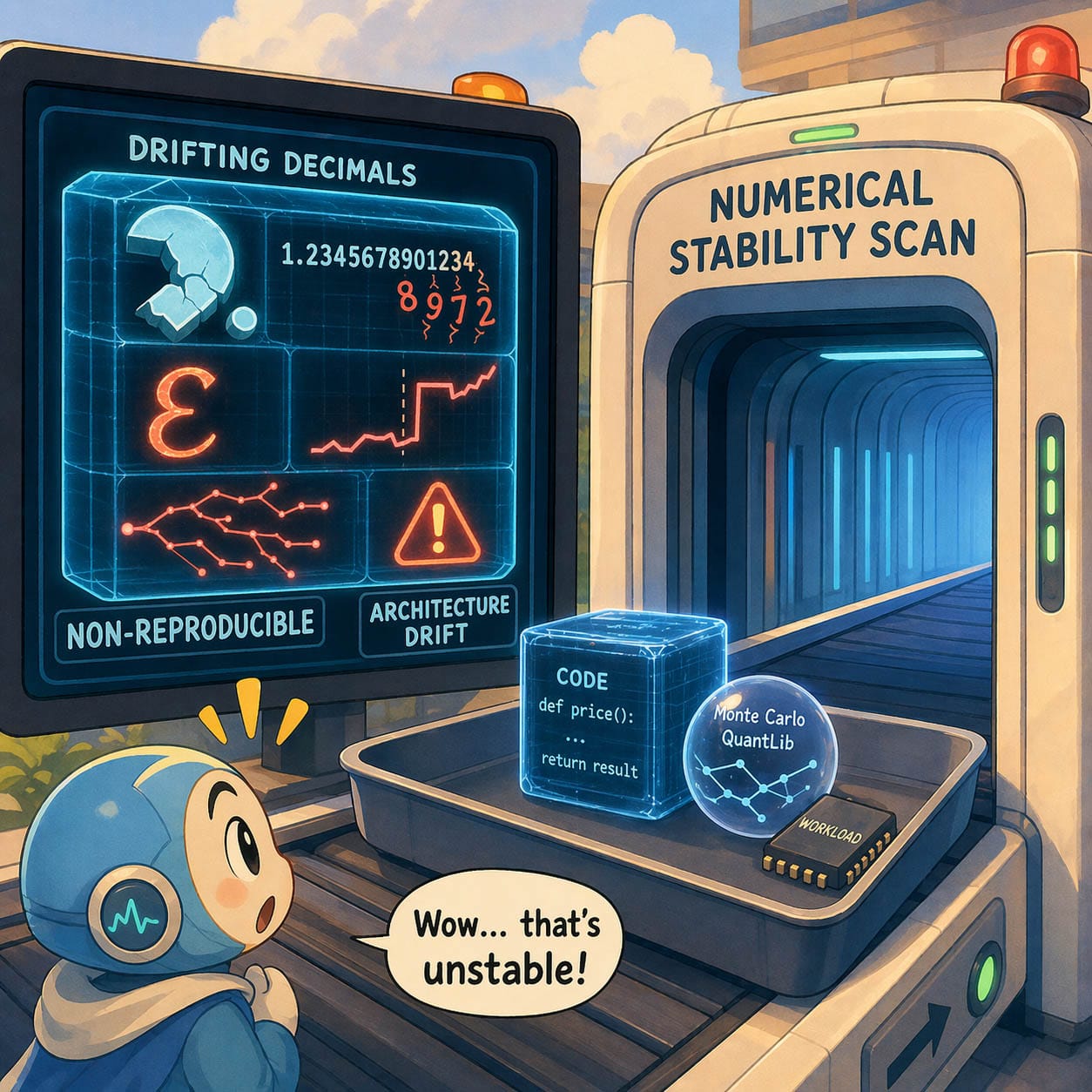

In our own news, we’ve moved the needle a little in making compute workloads more portable across both clouds and CPU architectures last week. Well, technically it happened over a longer period, but we talked about it last week! Check out FINOS ORB and our work on automating the resolution of numerical stability challenges when moving between CPU architectures.

In The News

Updates from the big three clouds on all things HPC.

Bolt Graphics provides an FP64 alternative to Nvidia GPUs

https://www.hpcwire.com/2026/04/22/bolt-graphics-targets-fp64-hpc-workloads-with-zeus-gpu/

Cerebras tries for IPO again. First of the AI IPOs this year?

We need more CPUs for AI? I don’t buy it. This is a lazy analysis

Meta still on a spending spree for hardware, this time Graviton CPUs

From HMx Labs

The end game is to make HPC workloads more portable, so we had a couple of small wins in that space last week. Progress in running across different clouds and CPU architectures.

Know someone else who might like to read this newsletter? Forward this on to them or even better, ask them to sign up here: https://cloudhpc.news