Using Waltz to Assess Cloud Readiness Across an Application Portfolio

In the previous post we argued that cloud migration is, at its core, an architecture visibility problem rather than an infrastructure problem. The accompanying case study showed how Waltz was used to give a large enterprise a connected, evidence-based view of its application estate, and to answer the questions that actually drive migration outcomes:

· Which applications are ready to move

· Which should be retained, retired, replaced, or re-architected

· How readiness should shape the migration plan

This post is the practical follow-on. How do you build a cloud readiness model inside Waltz, score applications consistently, and turn the result into something a migration programme can execute against?

The short answer is that readiness is not a single score. It is a structured judgement made up of several dimensions, each of which needs to be defined, captured, and rated in a way that survives contact with a real portfolio.

Readiness is multi-dimensional, not a single number

A common failure mode in early cloud planning is to reduce readiness to a single technical question: can this application run in the cloud? That question is too narrow. It tells you nothing about whether the application should move, when it should move, or how.

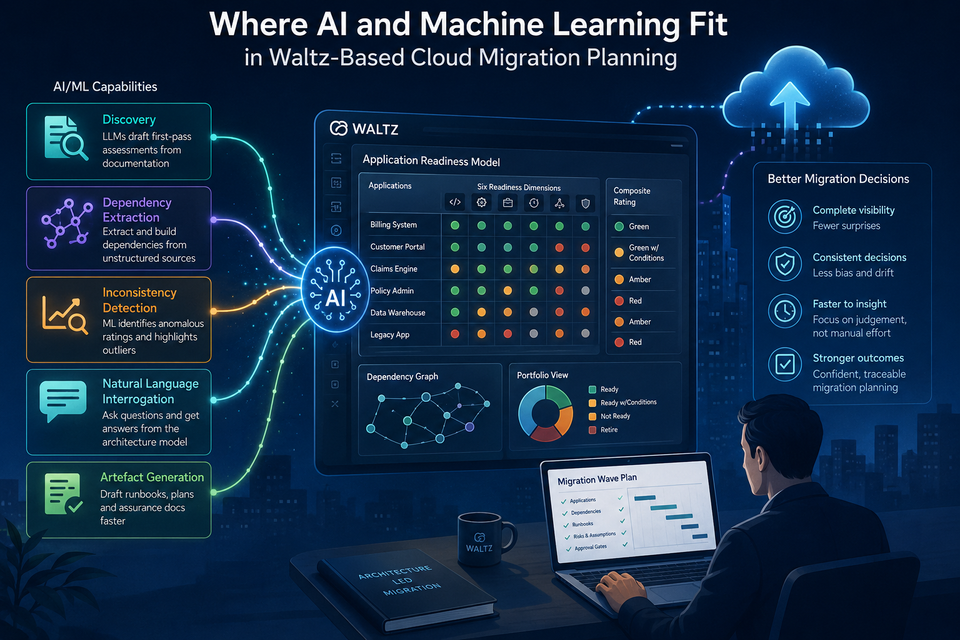

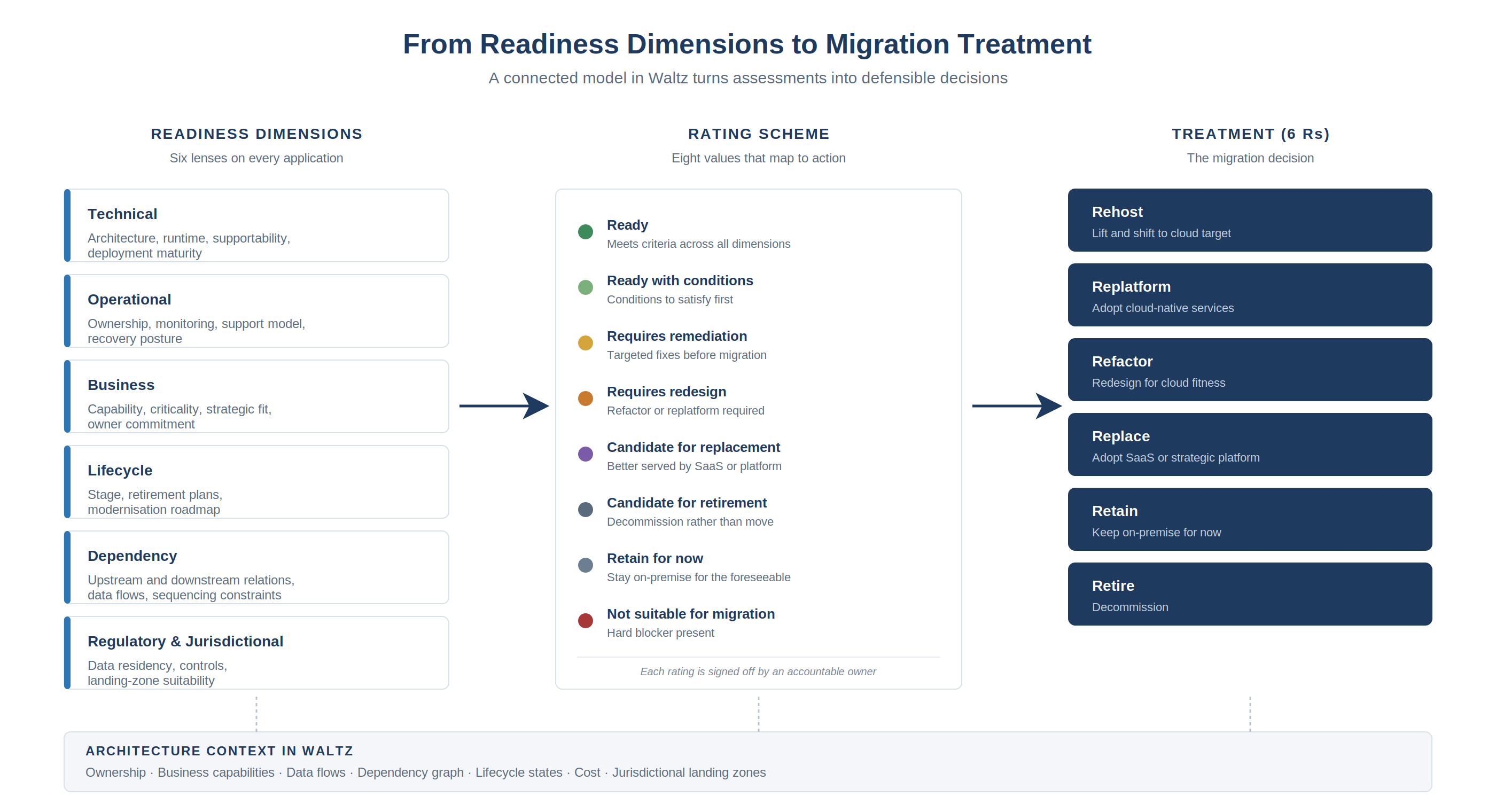

A better readiness model treats readiness as a small set of distinct dimensions, each answering a different question about the application. The model below uses six, which is enough to capture the meaningful distinctions without becoming unwieldy:

Technical readiness — can the application run in a cloud target environment without significant rework?

Operational readiness — can the application be operated, monitored, supported, released, and recovered in a cloud environment?

Business readiness — does the business want or need this application to move now, and is its value proportionate to the migration effort?

Lifecycle readiness — where is the application in its lifecycle, and does that lifecycle position make migration sensible?

Dependency readiness — can the application move independently, or is it bound up with systems that are not ready?

Regulatory and jurisdictional readiness — does the application handle data subject to residency, regulatory, or contractual constraints that affect target landing zones, hosting region, or controls?

Treating these as separate dimensions matters because applications fail readiness for very different reasons. An application that is technically pristine but regulatorily constrained is a different problem from one that is operationally weak but business critical. A single composite score hides those distinctions; a multi-dimensional model exposes them.
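A minimal Python sketch makes the point concrete. It is not Waltz's data model: the `Dimension`, `Rag`, and `ReadinessProfile` names, the three-point scale, and the averaging rule are all assumptions made for illustration, and both applications are invented.

```python
from dataclasses import dataclass
from enum import Enum, IntEnum


class Dimension(Enum):
    TECHNICAL = "technical"
    OPERATIONAL = "operational"
    BUSINESS = "business"
    LIFECYCLE = "lifecycle"
    DEPENDENCY = "dependency"
    REGULATORY = "regulatory"


class Rag(IntEnum):
    RED = 0
    AMBER = 1
    GREEN = 2


@dataclass
class ReadinessProfile:
    app: str
    ratings: dict  # maps Dimension -> Rag

    def composite(self) -> float:
        """Naive single score: the mean of the dimension ratings."""
        return sum(self.ratings.values()) / len(self.ratings)

    def weakest(self) -> list:
        """Dimensions holding the lowest rating, in declaration order."""
        low = min(self.ratings.values())
        return [d for d, r in self.ratings.items() if r == low]


def profile(app: str, **overrides: Rag) -> ReadinessProfile:
    """Build a profile that is Green everywhere except the named overrides."""
    ratings = {d: Rag.GREEN for d in Dimension}
    for name, rating in overrides.items():
        ratings[Dimension[name.upper()]] = rating
    return ReadinessProfile(app, ratings)


# Two hypothetical applications with very different problems.
pristine_but_constrained = profile("app-a", regulatory=Rag.RED)
weak_operations = profile("app-b", operational=Rag.AMBER, dependency=Rag.AMBER)
```

Both profiles produce the same mean, yet `weakest()` points at a regulatory blocker in one case and at operating-model and dependency gaps in the other. That difference is exactly what a single number throws away.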

Defining measurable criteria, not opinions

The next step is to define what each dimension means in your environment. This is where many readiness exercises drift, because the criteria become subjective and the ratings become inconsistent across teams.

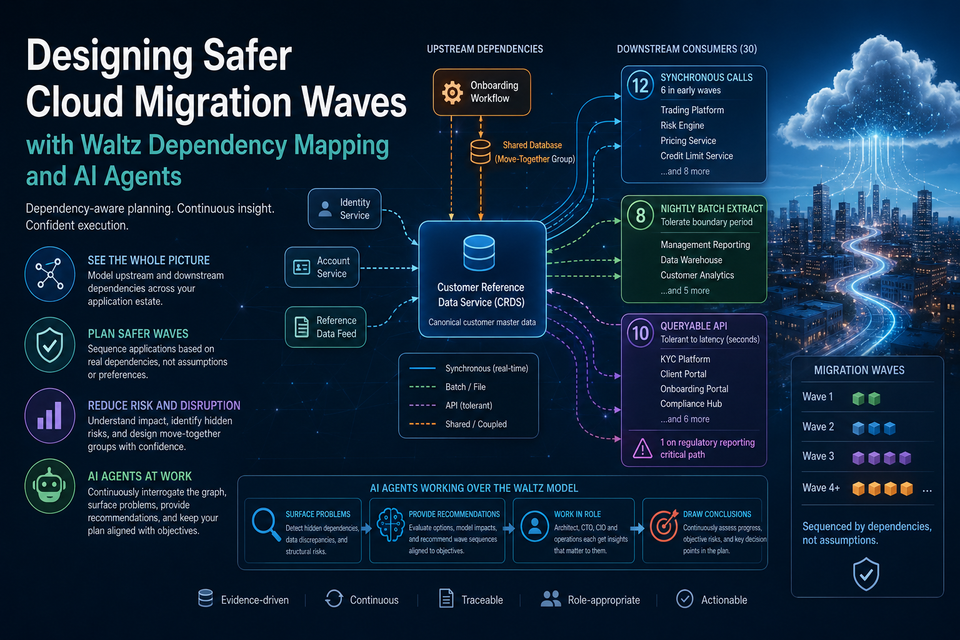

Waltz supports this through its assessment and rating model. For each dimension, you define a small set of measurable criteria, and for each criterion you define a rating scheme. The discipline is in the definitions. A rating of Green for technical readiness should mean the same thing whether the application is owned by a trading platform team or a back-office team. That requires written criteria.

The criteria below are illustrative rather than prescriptive. Every estate will adapt them, but they give a sense of what each dimension should actually capture.

Technical readiness criteria typically include current hosting pattern, operating system and database supportability, application architecture style, integration complexity, use of unsupported or legacy components, environment consistency across dev/test/prod, deployment automation maturity, and performance and resilience characteristics. The useful question is not "can this application be lifted into the cloud" but "can it operate safely, supportably, and economically in the proposed target environment".

Operational readiness criteria typically include clarity of ownership, support model maturity, monitoring and alerting coverage, incident and problem management, backup and recovery posture, disaster recovery expectations, release management maturity, runbook availability, and service-level expectations. Cloud migration does not remove operational responsibility; it usually makes weak operating models more visible.

Business readiness criteria typically include the business capability supported, criticality to revenue or regulatory obligations, user and business-unit reach, strategic importance, alignment with future roadmaps, duplication with other systems, business owner commitment, and any in-flight initiatives that compete for change capacity.

Lifecycle readiness criteria typically include the application's current lifecycle state, planned decommissioning dates, modernisation initiatives already in flight, and the likely future shape of the capability the application supports.

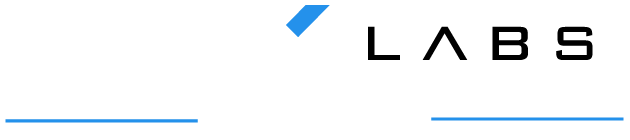

Dependency readiness criteria typically include the number and criticality of upstream and downstream dependencies, interface and protocol types, batch versus real-time flows, shared infrastructure dependencies, cross-border data flows, testing complexity, and required migration grouping. In Waltz these are modelled as relationships rather than notes, which is what makes dependency-aware planning possible.

Regulatory and jurisdictional readiness criteria typically include data classification, presence of personal or regulated data, jurisdictional and country-specific hosting requirements, data residency obligations, regulatory exposure, required controls, target landing-zone suitability, and the evidence required for post-migration assurance. As the case study showed, this dimension is often the one that determines not whether an application can move, but where it can land.

The point is not that your criteria must look exactly like these. The point is that they must be written down, agreed, and applied consistently — so that ratings can be defended. A rating that can be explained is a rating that can be governed.
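One way to keep criteria honest is to treat the written definition as part of the criterion itself. The sketch below is hypothetical: the `Criterion` class, the three rating names, and the wording of each definition are assumptions for illustration, not Waltz's assessment API and not a recommended set of criteria.

```python
from dataclasses import dataclass

RATINGS = ("Green", "Amber", "Red")


@dataclass(frozen=True)
class Criterion:
    dimension: str
    name: str
    definitions: dict  # rating -> the written, agreed meaning of that rating

    def missing_definitions(self) -> list:
        """Ratings that have no written definition yet."""
        return [r for r in RATINGS if not self.definitions.get(r, "").strip()]

    def explain(self, rating: str) -> str:
        """Render a rating together with the definition that justifies it."""
        return f"{self.dimension} / {self.name} = {rating}: {self.definitions[rating]}"


# Hypothetical wording; every estate will write its own.
runtime_support = Criterion(
    dimension="Technical readiness",
    name="Runtime supportability",
    definitions={
        "Green": "Runtime is on a vendor-supported version.",
        "Amber": "Runtime is supported but an upgrade is already due.",
        "Red": "Runtime is out of vendor support.",
    },
)

# A criterion that is not yet fit to be rated: nobody has written down Red.
alert_routing = Criterion(
    dimension="Operational readiness",
    name="Alert routing",
    definitions={
        "Green": "Alerts reach a named on-call team.",
        "Amber": "Alerts exist but routing is unclear.",
    },
)
```

Here `alert_routing.missing_definitions()` reports that Red has no agreed meaning, which is a useful gate before any team starts rating against it. `runtime_support.explain("Red")` returns the rating with its justification attached, which is the form a governance forum needs.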

A rating scheme that maps to action

A traffic-light scale is fine for a first pass, but readiness ratings become more useful when they map directly onto migration treatments. A scheme that works well in practice uses these eight values:

Ready: meets criteria across all dimensions; suitable for near-term migration.

Ready with conditions: broadly ready, but with specific conditions to satisfy first (a confirmed change window, a sign-off, a contained dependency).

Requires remediation: needs targeted fixes before migration, such as a runtime upgrade, a closed monitoring gap, or clarified ownership.

Requires redesign: fundamentally not cloud-suitable in current form; refactor or replatform required.

Candidate for replacement: better served by a SaaS or strategic platform than by migration.

Candidate for retirement: no longer justifies investment; should be decommissioned rather than moved.

Retain for now: should remain on-premise, at least for the foreseeable future, due to cost, contractual, or technical constraints.

Not suitable for migration: hard blocker present (regulatory, technical, contractual) that cannot currently be resolved.

The advantage of this scheme over a simple Ready/Not Ready split is that it forces the assessment to land on a treatment rather than a verdict. The output of the readiness model is a portfolio sorted by what should happen to each application, not just whether it passes or fails.
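As a rough illustration, the scheme can be written down as an enumeration with a next step attached to each value. The `Readiness` enum, the `NEXT_STEP` wording (paraphrased from the definitions above), and the sample portfolio are assumptions for this sketch rather than anything Waltz ships.

```python
from collections import defaultdict
from enum import Enum


class Readiness(Enum):
    READY = "Ready"
    READY_WITH_CONDITIONS = "Ready with conditions"
    REQUIRES_REMEDIATION = "Requires remediation"
    REQUIRES_REDESIGN = "Requires redesign"
    CANDIDATE_FOR_REPLACEMENT = "Candidate for replacement"
    CANDIDATE_FOR_RETIREMENT = "Candidate for retirement"
    RETAIN_FOR_NOW = "Retain for now"
    NOT_SUITABLE = "Not suitable for migration"


# Next step implied by each rating, paraphrased from the scheme's definitions.
NEXT_STEP = {
    Readiness.READY: "schedule for near-term migration",
    Readiness.READY_WITH_CONDITIONS: "satisfy the stated conditions, then migrate",
    Readiness.REQUIRES_REMEDIATION: "apply targeted fixes before migration",
    Readiness.REQUIRES_REDESIGN: "refactor or replatform",
    Readiness.CANDIDATE_FOR_REPLACEMENT: "replace with SaaS or a strategic platform",
    Readiness.CANDIDATE_FOR_RETIREMENT: "decommission rather than move",
    Readiness.RETAIN_FOR_NOW: "keep on-premise for the foreseeable future",
    Readiness.NOT_SUITABLE: "hold until the hard blocker can be resolved",
}


def group_by_treatment(portfolio: dict) -> dict:
    """Group application names by next step, in scheme order."""
    grouped = defaultdict(list)
    for app, rating in portfolio.items():
        grouped[rating].append(app)
    return {NEXT_STEP[r]: sorted(grouped[r]) for r in Readiness if r in grouped}


# Hypothetical portfolio slice.
portfolio = {
    "payments-gateway": Readiness.READY,
    "rra": Readiness.READY_WITH_CONDITIONS,
    "legacy-crm": Readiness.CANDIDATE_FOR_REPLACEMENT,
    "fax-router": Readiness.CANDIDATE_FOR_RETIREMENT,
    "hr-portal": Readiness.READY,
}
treatment_view = group_by_treatment(portfolio)
```

The resulting `treatment_view` is keyed by what should happen next, so the same data that records the assessment also produces the work list.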

Scoring applications consistently

With the dimensions, criteria, and rating scheme defined, scoring becomes a structured activity rather than a workshop debate.

Waltz allows assessments to be applied at the application level, captured against a defined scheme, and surfaced alongside the rest of the architecture context: ownership, business capabilities, data flows, dependencies, and lifecycle. That matters because the readiness rating is rarely the only piece of information needed to make a decision. It is one input into a wider model.

In practice, scoring works best when it is done in waves rather than all at once. A first pass establishes a baseline across the portfolio using the information already known, often from existing CMDBs, application inventories, architecture documents, and owner interviews. This is enough to separate obvious migration candidates from obvious blockers, and to identify the population of applications that need deeper assessment.

A second pass focuses effort where it matters: applications that are borderline, applications with significant business or regulatory weight, and applications whose dependencies make them pivotal in the migration sequence. These are the applications where a wrong rating is most expensive.

The aim is not perfection on day one. It is a consistent, traceable, and improvable view of readiness across the estate.
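The second-pass selection can be sketched as a simple filter over the baseline. Everything specific here is an assumption: the `BaselineEntry` fields, the definition of "borderline" as at least one Amber and no Red, and the `PIVOTAL_DEPENDENTS` threshold would all need to be agreed for a real estate.

```python
from dataclasses import dataclass

PIVOTAL_DEPENDENTS = 5  # assumed threshold for "pivotal in the sequence"


@dataclass(frozen=True)
class BaselineEntry:
    app: str
    amber_count: int        # dimensions rated Amber in the first pass
    red_count: int          # dimensions rated Red in the first pass
    business_critical: bool
    regulated_data: bool
    dependent_apps: int     # applications that consume this one


def second_pass_reasons(entry: BaselineEntry) -> list:
    """Reasons an application deserves deeper assessment; empty if none."""
    reasons = []
    if entry.amber_count > 0 and entry.red_count == 0:
        reasons.append("borderline")
    if entry.business_critical or entry.regulated_data:
        reasons.append("business or regulatory weight")
    if entry.dependent_apps >= PIVOTAL_DEPENDENTS:
        reasons.append("pivotal in the migration sequence")
    return reasons


# Hypothetical first-pass baseline.
baseline = [
    BaselineEntry("intranet-wiki", 0, 0, False, False, 1),
    BaselineEntry("rra", 2, 1, True, True, 1),
    BaselineEntry("customer-master", 1, 0, False, False, 12),
]

second_pass = {}
for entry in baseline:
    reasons = second_pass_reasons(entry)
    if reasons:
        second_pass[entry.app] = reasons
```

Recording the reason alongside the selection keeps the second pass traceable: anyone can see why an application was singled out and challenge the threshold rather than the outcome.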

A worked example

Consider a regional reporting application, call it RRA, sitting in a financial services estate. On a server inventory it looks unremarkable: a supported runtime, a standard database, a familiar hosting pattern. A naive assessment would mark it as a strong rehost candidate.

The multi-dimensional model tells a different story.

· Technical readiness: Green. Supported runtime, recent database version, already containerised in non-production.

· Operational readiness: Amber. Ownership is split between two teams after a recent reorganisation. Monitoring exists but alert routing is unclear.

· Business readiness: Green. Used daily by a regulated reporting function; clear business owner; on the strategic roadmap.

· Lifecycle readiness: Green. Mid-lifecycle, no decommissioning plans, modernisation roadmap aligned with cloud target.

· Dependency readiness: Red. Receives an overnight feed from a legacy mainframe extract that is itself out of scope for migration in the current wave. A downstream regulatory reporting platform consumes its output on a fixed daily cycle.

· Regulatory readiness: Amber. Handles data subject to a specific country's residency requirements. The target landing zone in that region is provisioned but has not yet been certified for this data class.

The composite rating is Ready with conditions. The conditions are concrete: resolve the ownership ambiguity, complete landing-zone certification, and either sequence the upstream feed appropriately or build a temporary bridge for the migration window. The application is not blocked, but it is not a first-wave candidate either. It belongs in a later wave, after a small remediation backlog and a specific governance step.

A single-score model would have either overstated readiness (Green technically, therefore go) or understated it (Red on dependency, therefore stop). The multi-dimensional model produces the right answer: yes, but later, and here is exactly what has to happen first.
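The RRA assessment can be captured as data, with each non-Green finding carrying the condition that would clear it. The roll-up rule below is an assumption for this sketch, not a Waltz feature and not the only reasonable rule: it covers just three of the eight ratings and treats the composite as "Ready with conditions" whenever every open finding can be cleared by the programme. The `Finding` class and its `resolvable` flag are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Finding:
    dimension: str
    rating: str                      # "Green", "Amber" or "Red"
    condition: Optional[str] = None  # what must happen first, if anything
    resolvable: bool = True          # can the programme clear it?


def composite(findings: list) -> tuple:
    """Return (composite rating, open conditions) under the assumed rule."""
    open_items = [f for f in findings if f.rating != "Green"]
    conditions = [f.condition for f in open_items]
    if not open_items:
        return "Ready", conditions
    if any(not f.resolvable for f in open_items):
        return "Not suitable for migration", conditions
    return "Ready with conditions", conditions


# The RRA example, with conditions taken from the text above.
rra = [
    Finding("Technical", "Green"),
    Finding("Operational", "Amber", "resolve the ownership ambiguity"),
    Finding("Business", "Green"),
    Finding("Lifecycle", "Green"),
    Finding("Dependency", "Red",
            "sequence the upstream feed or build a temporary bridge"),
    Finding("Regulatory", "Amber", "complete landing-zone certification"),
]

rating, conditions = composite(rra)
```

The useful output is the pair, not the label: the rating says "yes, but later" and the conditions list says what has to happen first.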

How the dimensions feed treatment decisions

Once each application has been assessed across the six dimensions, the rating scheme above turns the assessment into a migration treatment. The flow is straightforward in principle: the six dimension ratings go in, and one of the eight treatment-oriented ratings comes out.

The architecture context Waltz already holds (ownership, capabilities, data flows, dependency relationships) is what allows the rating to translate into a defensible treatment. Without that context, the rating is just an opinion. With it, the rating becomes a decision.

Separating candidates from blockers

Once the assessments are in place, the portfolio begins to sort itself.

Strong migration candidates emerge as applications that rate well across most or all dimensions: technically suitable, operationally mature, business-aligned, mid-lifecycle, dependency-light, and free of regulatory blockers. These are the applications that justify early migration waves. They tend to deliver value quickly and build programme confidence.

Blockers emerge in two flavours. The first are hard blockers: regulatory constraints that have not yet been resolved, lifecycle decisions that have not been made, or technical conditions that genuinely prevent migration. These applications do not belong in near-term waves regardless of how attractive they otherwise look. The second are soft blockers: applications where readiness can be improved with targeted remediation, such as a runtime upgrade, a dependency redesign, or an ownership clarification. These are candidates for a remediation backlog that runs alongside the migration programme.

The value of this separation is that it changes the conversation. Instead of asking "is this application ready", the question becomes "what would it take to make this application ready, and is that worth doing". That is a much more useful question for portfolio planning.

Identifying low-ROI applications

A readiness model is also a quiet but effective way of surfacing applications that should not be migrated at all.

When an application rates poorly on lifecycle readiness (close to retirement, declining usage, or already being replaced) and also rates poorly on business readiness, the case for migration weakens significantly. Spending engineering effort, governance attention, and cloud spend on an application that will be decommissioned within a year or two is rarely defensible.

The same logic applies to applications with high technical remediation cost and modest business value. Migration is an investment decision. If the cost of getting an application cloud-ready exceeds the value of having it in the cloud, the right answer may be to replace it, retire it, or retain it on-premise until natural end of life.

Waltz makes this visible because the readiness ratings sit alongside cost, ownership, and lifecycle data. Low-ROI applications are not hidden in a separate analysis; they are visible in the same model used for everything else. That makes it much harder to default to "migrate it anyway" simply because it is on the list.
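The two low-ROI signals described above can be expressed as a small check. This is a sketch under stated assumptions: "rates poorly" is read as Red, the `InvestmentView` fields are hypothetical, and the cost and value figures are invented, unitless estimates rather than anything Waltz calculates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InvestmentView:
    app: str
    lifecycle: str           # "Green", "Amber" or "Red"
    business: str            # "Green", "Amber" or "Red"
    remediation_cost: float  # estimated cost of getting cloud-ready
    cloud_value: float       # estimated value of having it in the cloud


def low_roi_reasons(view: InvestmentView) -> list:
    """Reasons to question migrating this application; empty if none."""
    reasons = []
    if view.lifecycle == "Red" and view.business == "Red":
        reasons.append("poor lifecycle and business readiness")
    if view.remediation_cost > view.cloud_value:
        reasons.append("remediation cost exceeds cloud value")
    return reasons


# Hypothetical applications and estimates.
views = [
    InvestmentView("fax-router", "Red", "Red", 40.0, 5.0),
    InvestmentView("batch-scheduler", "Green", "Amber", 120.0, 30.0),
    InvestmentView("payments-gateway", "Green", "Green", 20.0, 200.0),
]

low_roi = {}
for view in views:
    reasons = low_roi_reasons(view)
    if reasons:
        low_roi[view.app] = reasons
```

The check does not decide the outcome. It produces a short list of applications where "migrate it anyway" needs an explicit justification.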

Using readiness to support portfolio-level migration planning

The end goal of the readiness model is not the ratings themselves. It is the migration plan they enable.

Readiness ratings, when combined with the dependency and ownership information Waltz already holds, support several portfolio-level decisions:

· Wave design. Strong candidates with clean dependencies form the early waves. Applications with shared dependencies are sequenced together. Applications with hard blockers are deferred until those blockers are resolved.

· Strategy selection. Readiness across the dimensions points towards the right "R" for each application (Rehost, Replatform, Refactor, Replace, Retain, or Retire), rather than treating every application as a move candidate.

· Investment focus. Remediation effort can be directed at applications where it materially changes readiness and where the business case justifies it, rather than spread thinly across the estate.

· Risk management. Applications with regulatory or jurisdictional constraints can be planned into the right landing zones from the start, rather than discovered late and re-planned under pressure.

· Governance and assurance. Because the ratings are defined, traceable, and connected to the rest of the architecture model, they support both pre-migration decisions and post-migration validation. The case study showed how this same connected model supports jurisdictional landing-zone assurance after go-live.

This is what turns a readiness assessment from an analytical exercise into a planning tool.
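Wave design in particular lends itself to a sketch. The function below layers applications by their dependencies using Python's standard `graphlib` module, defers anything with a hard blocker, and lists where a temporary bridge to a deferred system would be needed. The layering rule (an application moves one wave after the latest in-scope system it depends on) is a simplifying assumption, and the application names are hypothetical.

```python
from graphlib import TopologicalSorter


def plan_waves(depends_on: dict, hard_blocked: set) -> tuple:
    """Return (waves, bridges) for the applications that are not hard-blocked.

    depends_on maps each application to the applications it depends on.
    """
    # Keep only in-scope applications and their in-scope dependencies.
    in_scope = {
        app: [d for d in deps if d not in hard_blocked]
        for app, deps in depends_on.items()
        if app not in hard_blocked
    }
    # Dependencies on deferred systems need a bridge during the migration window.
    bridges = sorted(
        (app, d)
        for app, deps in depends_on.items()
        if app not in hard_blocked
        for d in deps
        if d in hard_blocked
    )
    # static_order() yields dependencies before the applications that need them.
    wave_of = {}
    for app in TopologicalSorter(in_scope).static_order():
        upstream = in_scope.get(app, ())
        wave_of[app] = 1 + max((wave_of[d] for d in upstream), default=0)
    waves = {}
    for app, wave in wave_of.items():
        waves.setdefault(wave, []).append(app)
    return {w: sorted(apps) for w, apps in sorted(waves.items())}, bridges


# Hypothetical slice of an estate.
depends_on = {
    "ledger": [],
    "mainframe-extract": [],
    "rra": ["mainframe-extract", "ledger"],
    "reg-reporting": ["rra"],
}
waves, bridges = plan_waves(depends_on, hard_blocked={"mainframe-extract"})
```

A circular dependency makes `static_order()` raise `graphlib.CycleError`, which is a reasonable signal that the applications involved need to be grouped and moved together rather than sequenced.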

Readiness is a living model, not a one-off exercise

The final point worth making is that readiness changes.

An application that is not ready today may be ready in six months, after a runtime upgrade or an ownership clarification. A system marked as a strong migration candidate may be reclassified as a replacement candidate when a SaaS strategy emerges. A regulatory change may shift a target landing zone. A newly discovered dependency may move an application from Wave 2 to Wave 4.

This is where Waltz earns its place over a spreadsheet. Assessments, ratings, ownership, dependencies, and lifecycle states can be reviewed and updated as the programme progresses, and the portfolio view updates with them. In our Waltz deployments we go further, codifying underlying criteria so that signals from connected systems can refresh the model directly. Decisions made six months ago can be revisited with the evidence still attached. New applications can be assessed against the same criteria as everything else.

A readiness model that is captured once and frozen ages quickly. A readiness model that lives alongside the rest of the architecture continues to be useful for the duration of the programme, and beyond it, into the next round of portfolio decisions.
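The idea of revisiting a decision with the evidence still attached can be illustrated with an append-only history. This is a hypothetical structure, not how Waltz stores assessments: the `Assessment` and `ReadinessHistory` classes, the application name, the dates, and the evidence text are all invented for the sketch.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class Assessment:
    assessed_on: date
    rating: str
    evidence: str


@dataclass
class ReadinessHistory:
    app: str
    entries: list = field(default_factory=list)

    def record(self, assessment: Assessment) -> None:
        """Append a new assessment; earlier entries are never overwritten."""
        self.entries.append(assessment)
        self.entries.sort(key=lambda a: a.assessed_on)

    def as_of(self, day: date) -> Optional[Assessment]:
        """The most recent assessment on or before the given day, if any."""
        known = [a for a in self.entries if a.assessed_on <= day]
        return known[-1] if known else None


# Hypothetical application whose readiness improves after remediation.
history = ReadinessHistory("billing-engine")
history.record(Assessment(date(2024, 1, 15), "Requires remediation",
                          "Runtime out of vendor support"))
history.record(Assessment(date(2024, 7, 15), "Ready",
                          "Runtime upgraded; ownership confirmed"))

earlier = history.as_of(date(2024, 3, 1))
latest = history.as_of(date(2024, 8, 1))
```

Because nothing is overwritten, the programme can answer both "what is the rating now" and "what did we believe, and why, when we made the wave decision".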

A practical model, not a heavyweight one

The readiness model described here is deliberately practical. It is not a maturity framework, not a scoring methodology imported from elsewhere, and not a governance layer bolted on top of the migration programme. It is a small, consistent set of assessments captured in Waltz alongside the architecture context the organisation already needs.

The reason this works is the same reason the broader architecture-first argument works. Cloud migration decisions are architecture decisions. They depend on understanding applications in context: their business role, their condition, their dependencies, their lifecycle, and their regulatory exposure. A readiness model that captures those dimensions consistently and surfaces them alongside everything else Waltz already knows about the estate gives migration teams a defensible basis for deciding what moves, what changes, what stays, and what stops.

The goal is not simply to move applications to the cloud. It is to move the right applications, in the right order, for the right reasons, with the right controls.

That is what the case study client needed. It is what most large estates need. And it is achievable without building yet another spreadsheet.

HMx Labs helps organisations use Waltz to assess cloud readiness, map dependencies, and build evidence-based migration plans across complex application portfolios. If you are planning a large-scale migration and want to move beyond spreadsheet-led readiness assessment, get in touch.